Reducing your AI bias with synthetic data

In recent days, countries around the world have seen protests over a topic that does not always get the attention it deserves: inequality and discrimination against Black people in our society. Regardless of whom discrimination targets, one thing is certain: it exists. But how does that relate to data and AI? Through (algorithmic) bias. For that reason, today we will focus on how to deal with bias in datasets using synthetic data.

The dataset

For today’s topic, there could hardly be a better dataset than the Census dataset (you can easily find it on Kaggle). The data was collected by the US Census Bureau and extracted in 1994, so it is probably not the most up to date as far as demographics are concerned. The overall goal for this dataset is to classify whether a person has an income over 50k a year.

The dataset contains 41 variables, of which 32 are categorical, and around 30 observations. A variable to pay attention to is “race”, which has the following categories in this dataset: White, Black, Asian or Pacific Islander, Amer Indian Aleut or Eskimo, and Others. For the purpose of this exercise, we will keep only two of them: “White” and “Black”. As you can observe in the image below, the dataset is imbalanced:

Race category highly imbalanced in the Census dataset

White individuals represent around 87% of the dataset, whereas Black individuals account for only 13%. With a ratio of nearly 7 to 1 between White and Black individuals, this can have a huge impact on the models trained on the dataset: they will tend to learn the characteristics of the White population, which can result in poor predictions for any underrepresented race. Since we were not able to collect more records at this point, how can we achieve an equal representation in order to reduce the bias present in the input data as much as possible?
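As a quick sanity check, the imbalance can be measured directly from the category counts. The snippet below is a minimal sketch in plain Python; the labels are made up to mirror the 87%/13% split quoted above and are not the real Census records, and `class_shares` is a hypothetical helper name.

```python
from collections import Counter


def class_shares(labels):
    """Return each category's share of the total, as a fraction in [0, 1]."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


# Illustrative labels only (assumption): 87 "White" and 13 "Black" records.
race = ["White"] * 87 + ["Black"] * 13

shares = class_shares(race)

# How many majority-group records exist for each minority-group record.
imbalance_ratio = shares["White"] / shares["Black"]

print(shares)
print(round(imbalance_ratio, 1))
```

With a pandas DataFrame, `value_counts(normalize=True)` on the “race” column would give the same shares.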

Synthetic data to the rescue!

How can I use synthetic data to solve my dataset bias issues?

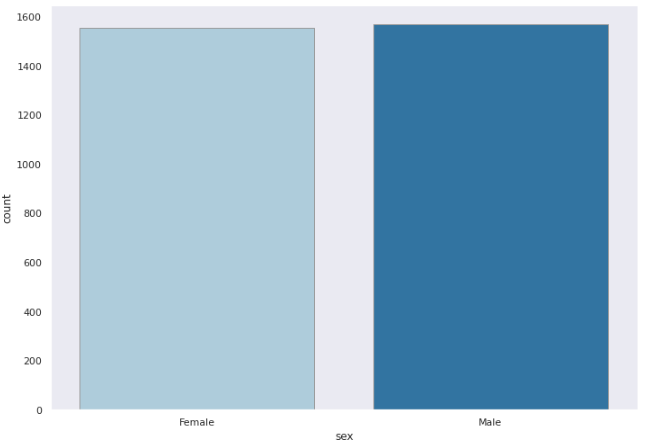

To address the bias, we will generate synthetic data for the Black population using YData’s synthetic data generator lib. Before we proceed, let’s double-check the “sex” variable:

Sex variable ratio between Female/Male individuals

In this subset of the population, males and females are equally represented. Now that we have the population from which we want to generate 3000 new samples to augment our training data, we are good to go and use YData’s synthetic data lib.
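The overall workflow (isolate the underrepresented group, generate new records for it, append them to the training data) can be sketched without any specific library. In the snippet below, `placeholder_generator` is a hypothetical stand-in that only resamples existing rows; it is not YData’s generator and produces no genuinely new records. The toy rows and column names are also invented for illustration.

```python
import random


def placeholder_generator(rows, n_samples, seed=0):
    """Stand-in (assumption) for a real synthetic data generator.

    It only resamples existing rows with replacement, so it creates no
    genuinely new records; a trained synthesizer would take its place.
    """
    rng = random.Random(seed)
    return [dict(rng.choice(rows)) for _ in range(n_samples)]


# Toy records, invented for illustration (not the Census data).
train = [
    {"race": "White", "sex": "Male", "income_over_50k": 1},
    {"race": "White", "sex": "Female", "income_over_50k": 0},
    {"race": "White", "sex": "Male", "income_over_50k": 0},
    {"race": "Black", "sex": "Female", "income_over_50k": 1},
]

# 1. Isolate the underrepresented group.
minority = [row for row in train if row["race"] == "Black"]

# 2. Generate extra records for that group only.
synthetic = placeholder_generator(minority, n_samples=2)

# 3. Append them to the original training data.
combined = train + synthetic

print(len(train), len(combined))
```

Only the training data should be augmented this way; evaluating on real, held-out records keeps the comparison between the original and combined datasets fair.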

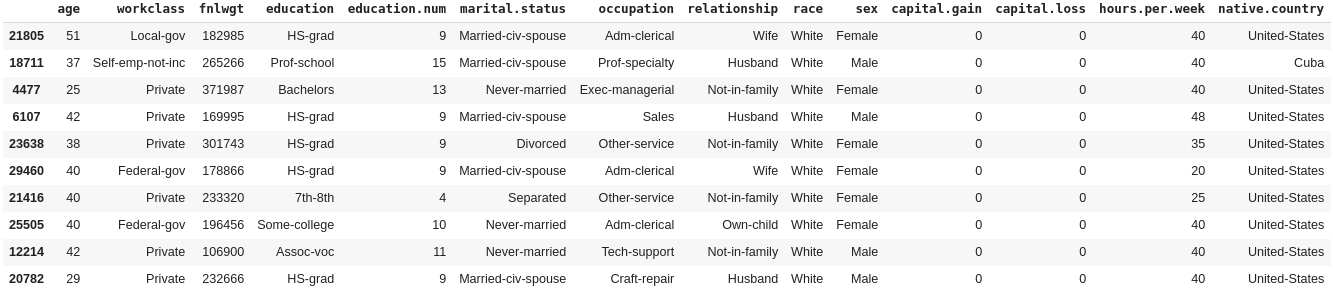

The adult census dataset

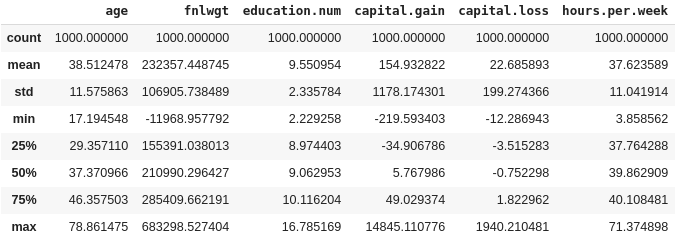

The synthetic data model trains quite fast (less than 1 minute). Since we are dealing with a fairly small amount of data with few variables, we already get synthetic data in good shape, with a score of around 98.78%.

General stats from the new 1000 generated samples

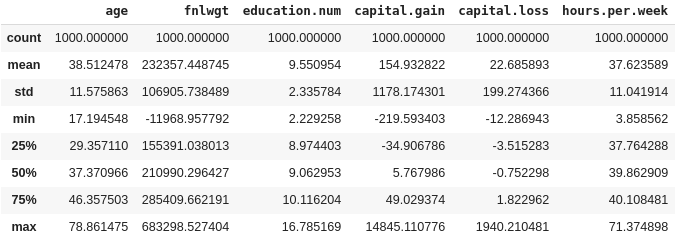

The final results

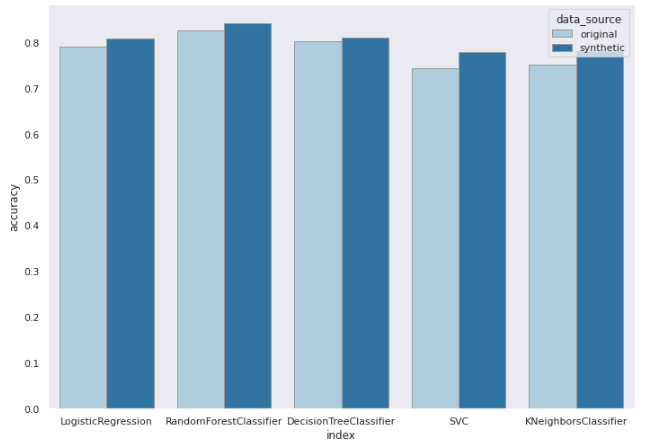

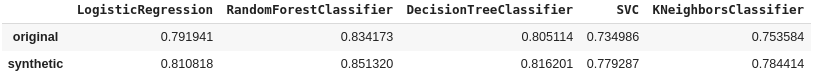

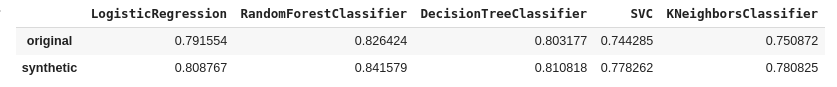

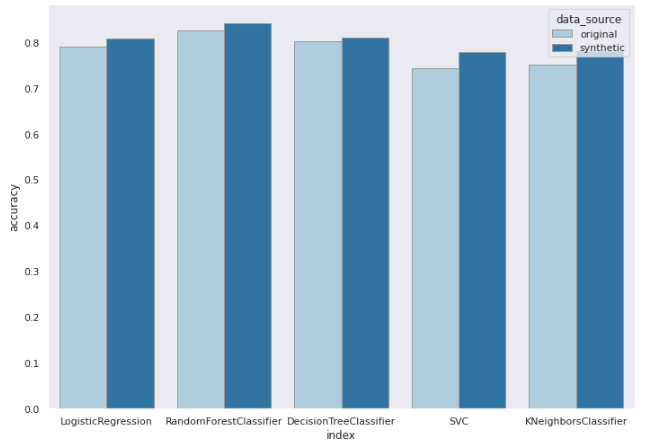

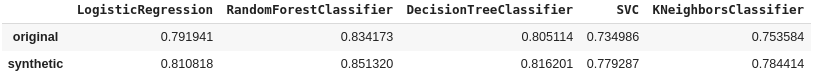

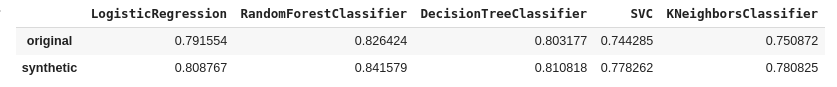

To compare results on the original dataset versus the new combined data (original + synthetic), we train a set of classification models. As per the image below, combining the real data with the synthetically augmented records of Black individuals resulted in an overall increase in both the models’ accuracy (2% on average) and f1_score (1.4% on average), with the biggest improvement observed for the SVM and KNeighbors models.

Average improvement of 2% accuracy for all the tested classifiers

Overall models accuracy for the original and combined datasets

Overall models f1_score for the original and combined datasets
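For reference, the two metrics used in this comparison can be computed as in the minimal sketch below. The labels and predictions are invented purely to show the mechanics and do not reproduce the results reported above; `pred_original` and `pred_combined` are hypothetical names for the predictions of a model trained on the original and on the combined data.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Invented test labels and predictions (assumption, not real results).
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
pred_original = [1, 0, 0, 1, 0, 1, 0, 0]
pred_combined = [1, 0, 1, 1, 0, 1, 0, 0]

for name, pred in [("original", pred_original), ("combined", pred_combined)]:
    print(name, accuracy(y_true, pred), f1_score(y_true, pred))
```

In practice, `accuracy_score` and `f1_score` from `sklearn.metrics` compute the same quantities.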

Conclusion

At YData we are concerned not only with the bias that exists in our day-to-day lives but, more importantly, with the bias present in most of the datasets that data scientists handle every single day. We can all do our part in reducing what is a massive problem today, and we are excited to show how synthetic data can help. Improve your model’s results while helping your business become fairer.

There are a ton of exciting applications for synthetic data, from reducing your organization’s privacy debt to helping your data science teams move faster and augmenting your data for training DL and ML models. We look forward to hearing about the use cases you’re struggling with, so feel free to reach out at hello@ydata.ai

Fabiana Clemente, Chief Data Officer at YData.