Synthetic data bootstrap

In the dynamic landscape of organizations high-quality data is a requirement for the development of many solutions - from software testing and validation all the way to Artificial Intelligence (AI) initiatives. In fact, the latest demand for a fast adoption of Large Language Models (LLM) have shown us that to achieve remarkable performance, we need not just any data, but vast and diverse datasets that capture the complexities of human language, communications and behaviors in general.

This awakening underscores the critical role that data plays in the AI ecosystem. It's not just about having data; it's about having the right kind of data in the right quantities. This realization has prompted a shift in how we perceive and prioritize data, leading to a greater emphasis on data collection, curation, management strategies and even - data generation!

However getting the right data at the right time is not always easy and the challenges vary - from data scarcity, privacy concerns to limited resources all of the above hinder the pursuit for the development of better solutions. And this is where synthetic data comes into play!

Synthetic data generation offers a multitude of benefits across various industries, from mitigating privacy concerns to enhancing AI model performance. By creating artificial data that mimics real-world scenarios, synthetic data helps overcome data scarcity issues, enabling more robust and diverse training datasets for AI models. Nevertheless, there are several types and ways to generate synthetic data, and all of them have their own benefits and applications:

- Data bootstrap or fake data: Generated from rules or prior business knowledge, bootstrap synthetic data allow you to quickly create a dataset even if you don’t have one. This method is useful for generating data for systems validations, or just to quickly create mock data that is shareable and that provides a glimpse on the data behavior without being too accurate.

- Generative models: When high-fidelity and utility is the requirement, either for privacy-preserving purposes or even for augmentation and AI enhancement, generative models are the way to go. Generative models are able to learn even the most complex underlying patterns of your data, being the most effective and flexible to generate more data without losing the original context.

- Simulations: Synthetic data generated through simulations is a powerful technique that creates artificial data by simulating real-world processes or scenarios. This method is particularly useful in fields such as autonomous vehicles, robotics, and physics-based simulations, where generating real data can be costly, time-consuming, or impractical.

- It can be achieved, for example, through conditional data generation.

Because raw data is not always available, or because sometimes we don’t have the complete awareness regarding the complex behaviors that certain systems might hold, the unavailability of data shouldn’t be a bottleneck to quickstart - enters data bootstrapping, a way to enhance your organization data initiatives even if real data is not yet available!

Understanding Data Bootstrapping

At its core, data bootstrapping is the process of generating synthetic data based on predefined rules and patterns. Unlike AI-based data generation methods that rely on actual data samples, data bootstrap utilizes algorithms and models to create new data points. This approach is particularly useful in scenarios where access to real data is not an option or simply with an access that is too restrictive.

The role of fake data & applications

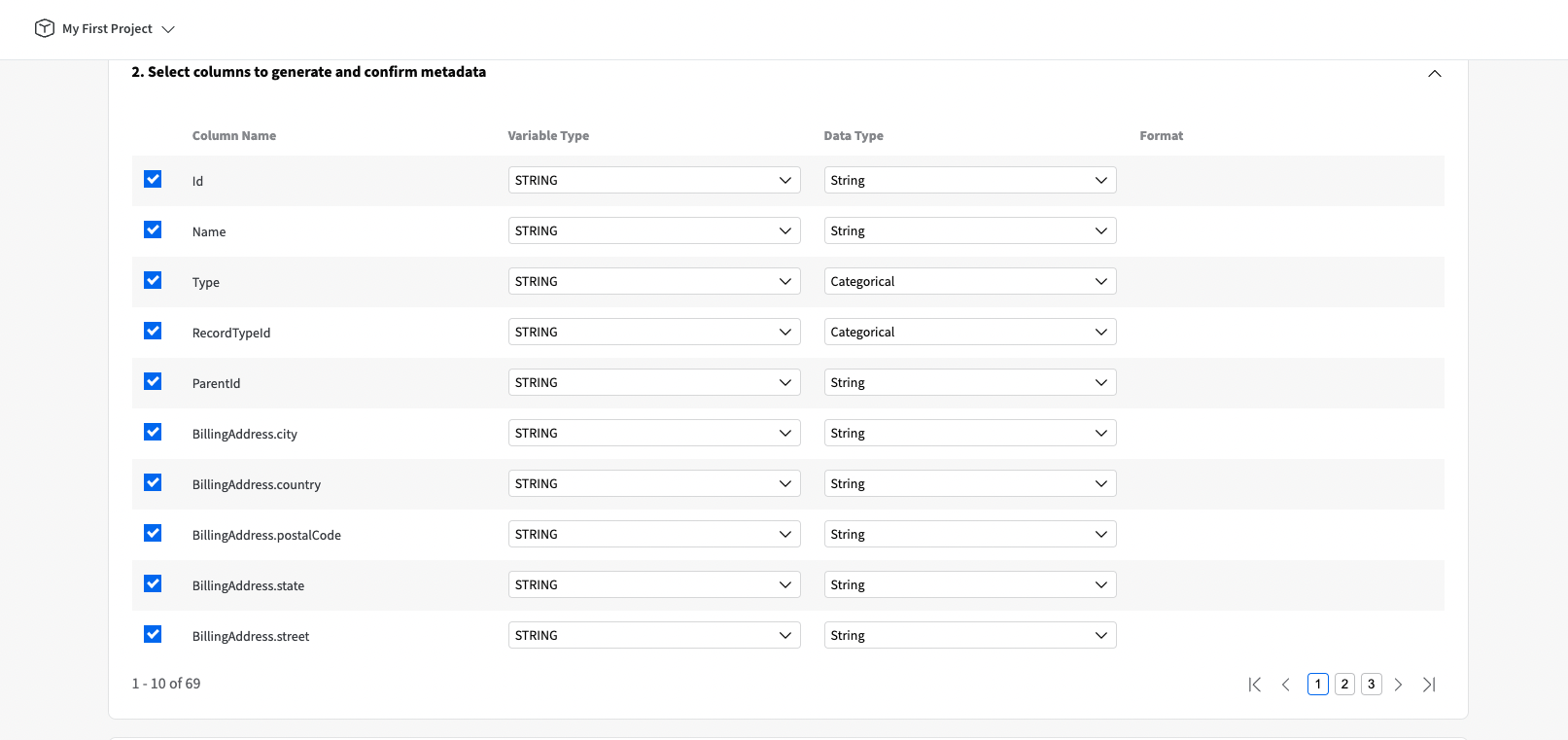

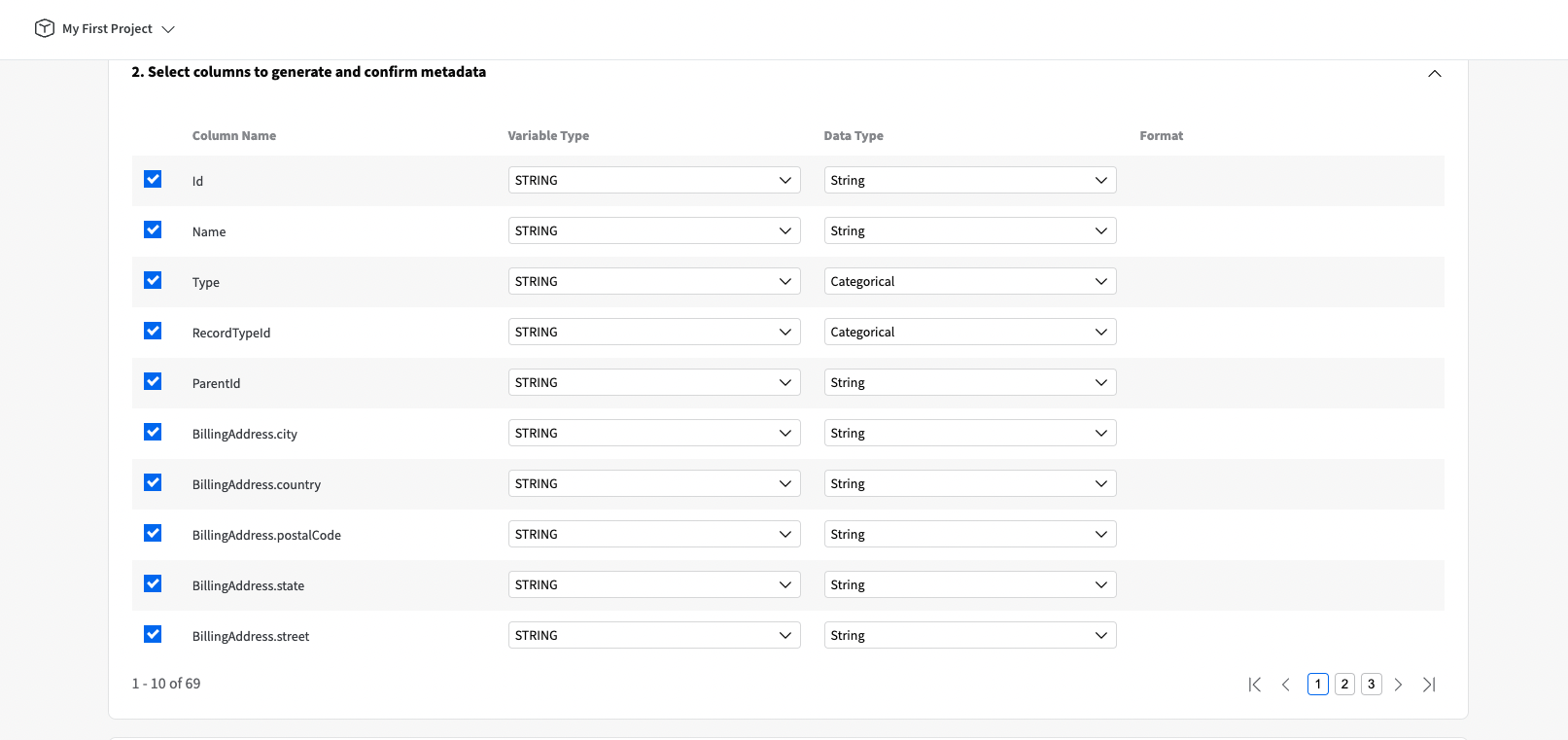

Central to the concept of data bootstrap is the fake data generation based on rules and known distributions. YData Fabric introduces the FakerSynthesizer that allows data practitioners to generate complex table schemas with few inputs - from a dummy table with random values all the way to a dataset with pre-defined distributions, missing values, names, addresses, etc. The ease to use code interface, makes it a valuable asset in the process of data generation!

Data bootstrap has a wide range of applications across industries, as it can be used for various purposes such as testing, development, and demonstration. It does not represent real or actual data and is often used in situations where using real data is not feasible or appropriate.

Some common use cases for data bootstrap and fake data include:

- Software Testing: fake data is used to test software applications and systems during development to ensure they function correctly under different scenarios.

- Database Development: Dummy data is used to populate databases during development or testing to simulate real-world conditions without using actual sensitive or confidential information.

- Data Visualization: Dummy data is used in data visualization projects to create mock datasets for demonstrating the capabilities of visualization tools and techniques.

- Prototyping: Dummy data is used in prototyping new products or features to provide a realistic representation of how the final product will look and behave.

- Training and Education: Dummy data is used in training and educational programs to teach concepts and techniques without using real data.

Overall, Data Bootstrap plays a crucial role in various fields by providing a safe and effective way to generate useful data even though there is not real data available or accessible! And the most exciting part? You can have a test of the benefits and capabilities of Data Bootstrap and dummy data in YData Fabric. Register in Fabric Community and give it a go!

It serves as a critical tool not only in software development, populating databases and prototyping new products.

Beyond these immediate applications, fake data also holds significant potential for advancing AI initiatives. In AI development, where large, diverse datasets are essential, fake data can be used to fill-in some of the gaps in training data, unlocking the development of these systems even though real data is not yet available.